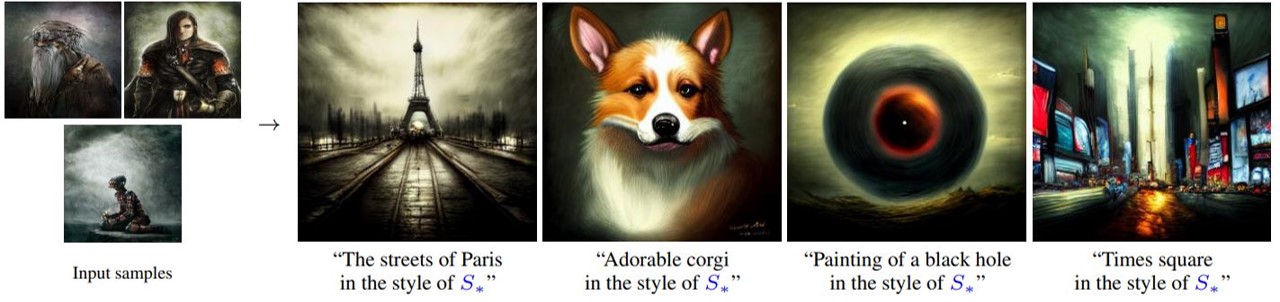

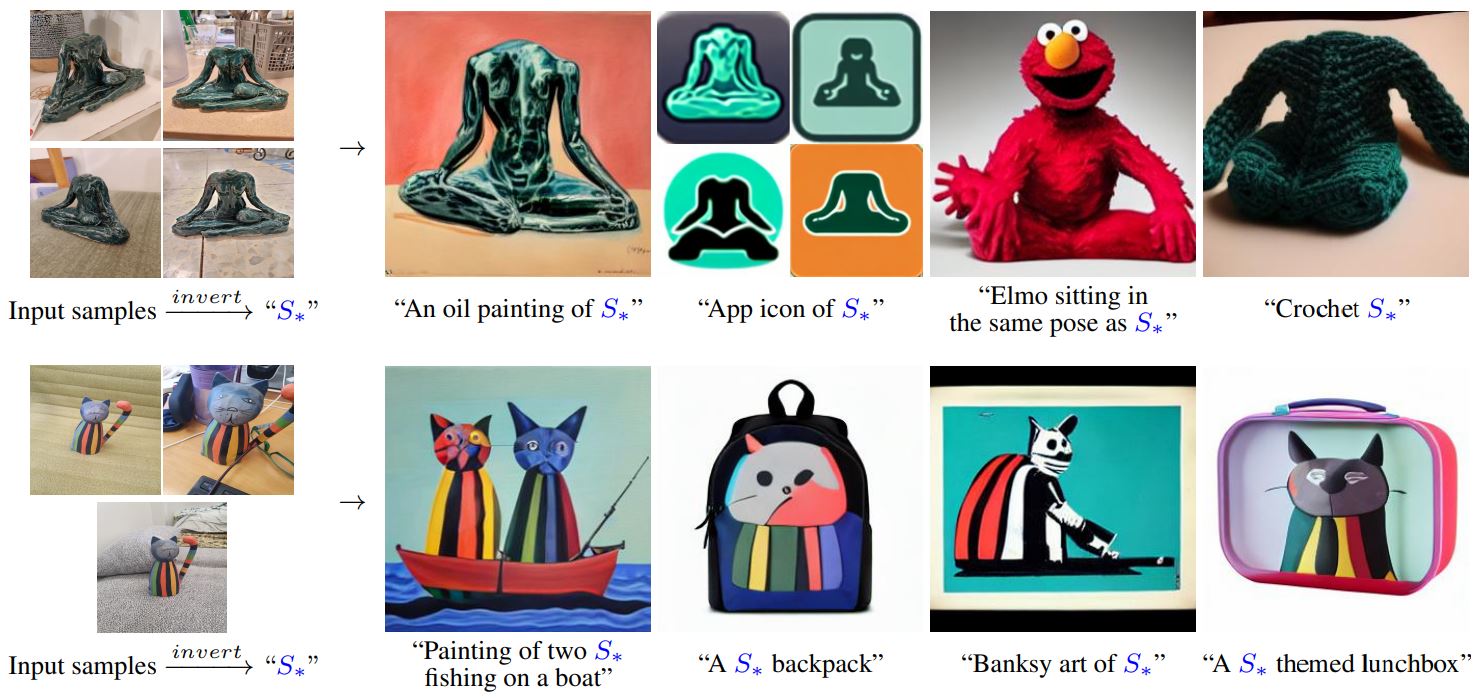

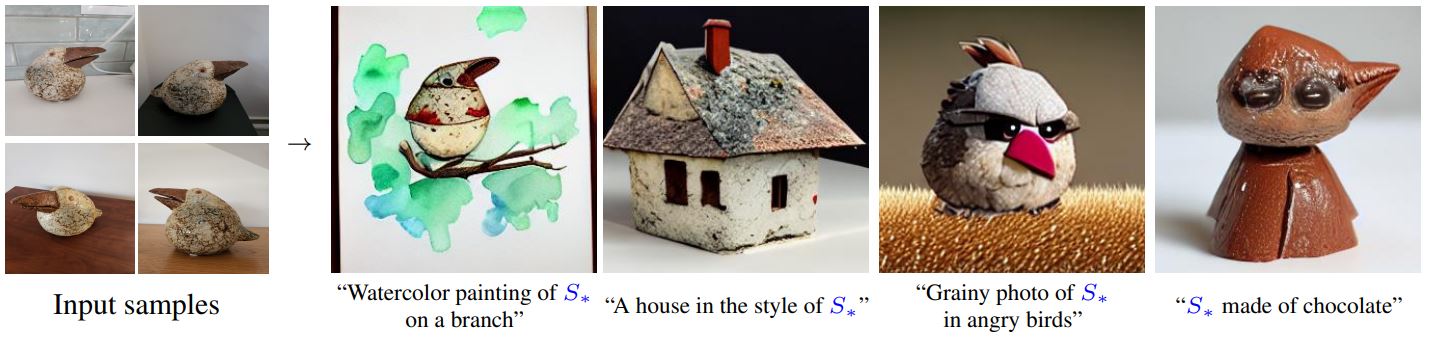

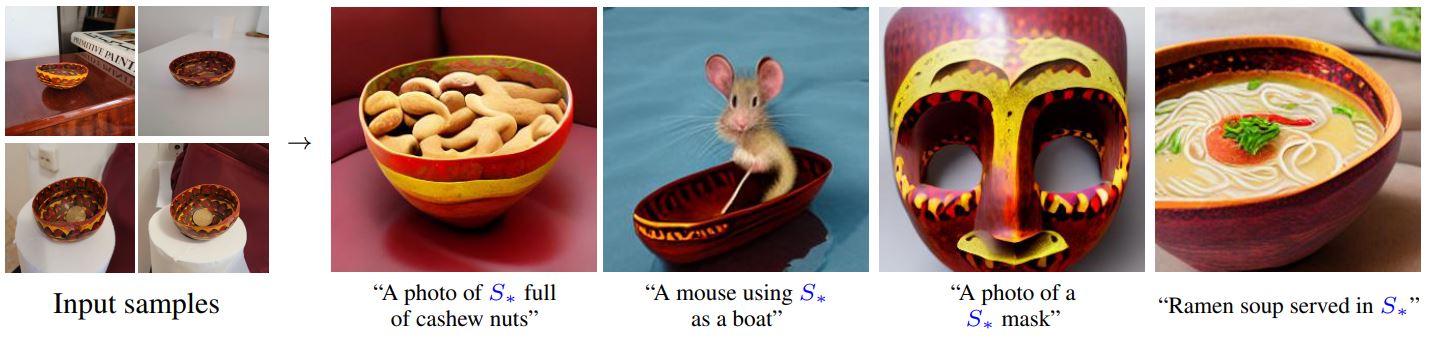

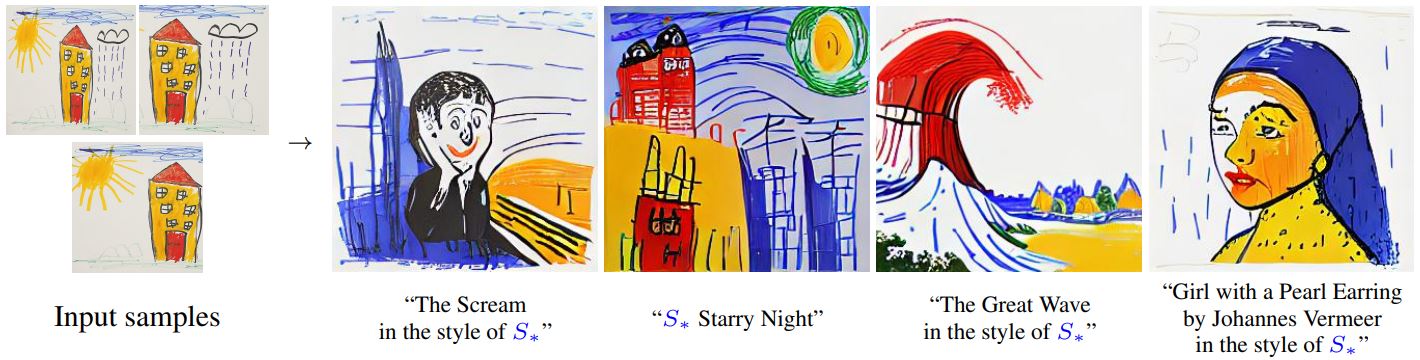

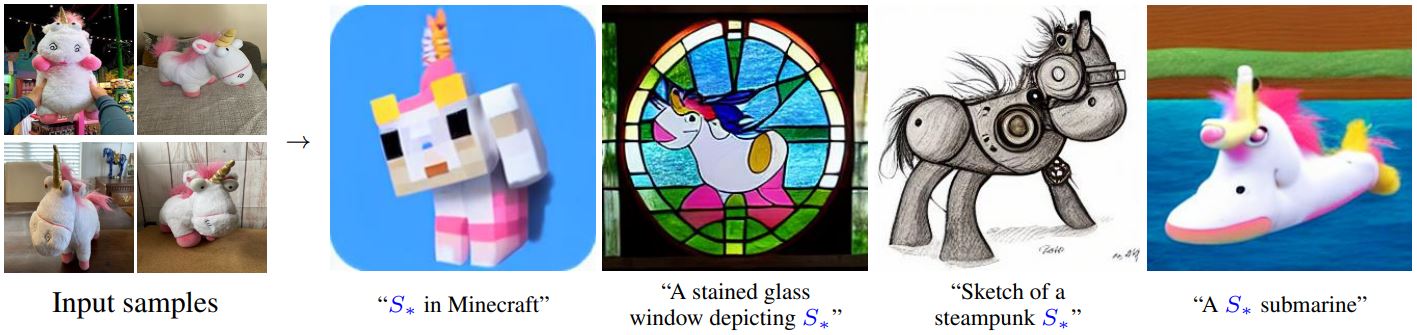

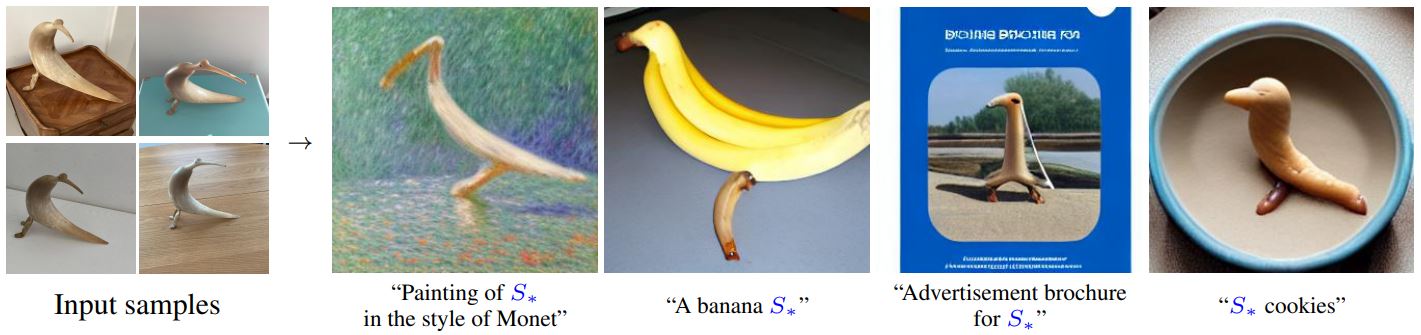

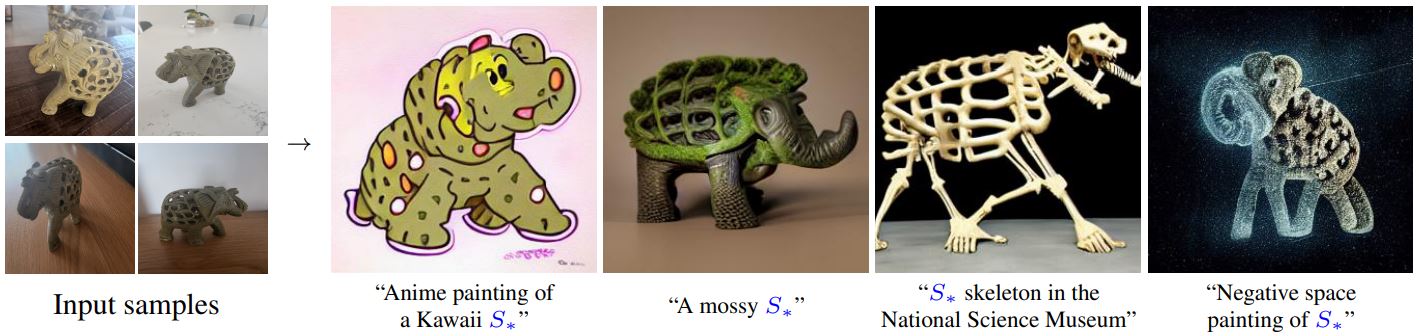

Text-to-image models offer unprecedented freedom to guide creation through natural language.

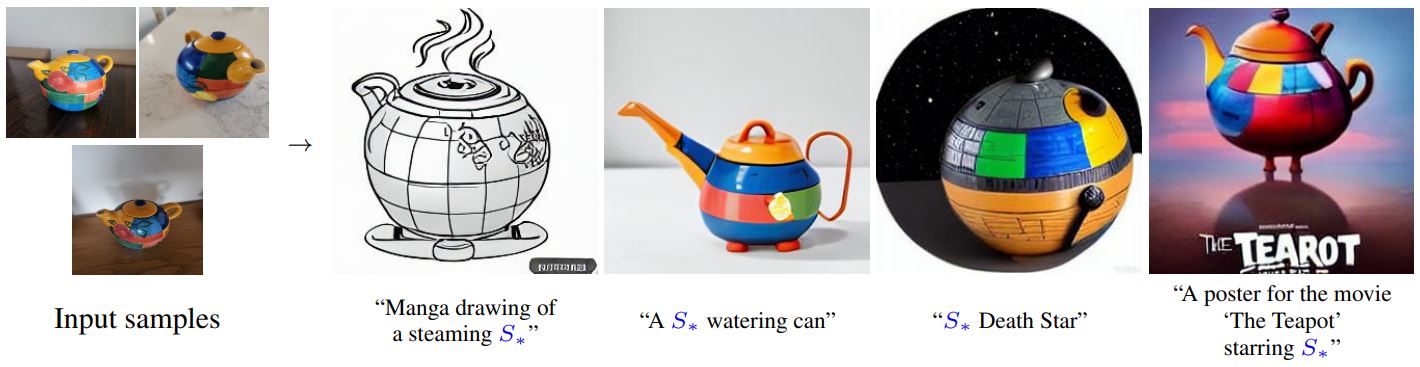

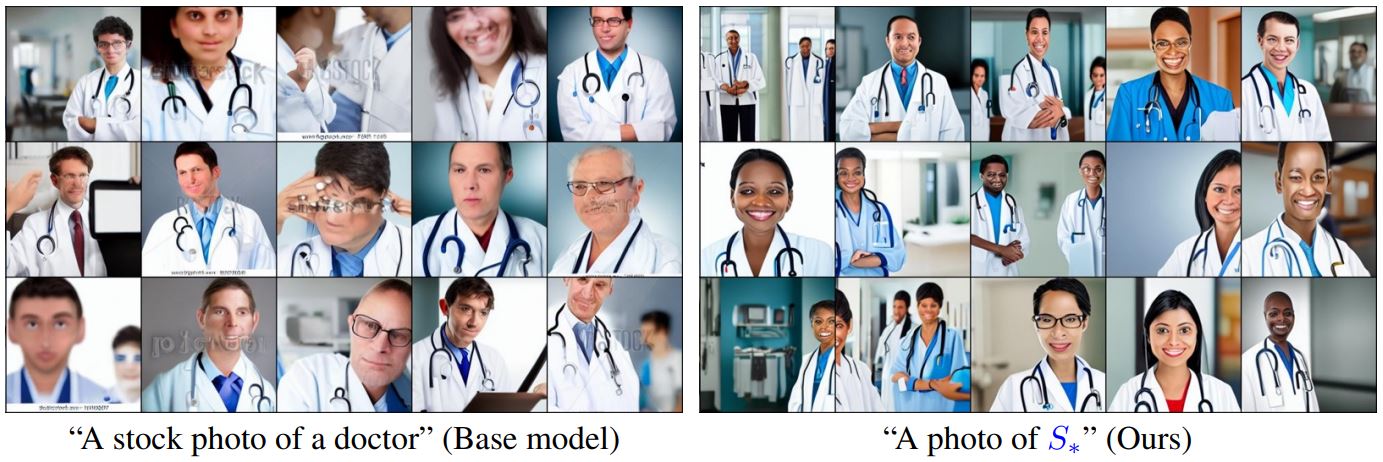

Yet, it is unclear how such freedom can be exercised to generate images of specific unique concepts, modify their appearance, or compose them in new roles and novel scenes.

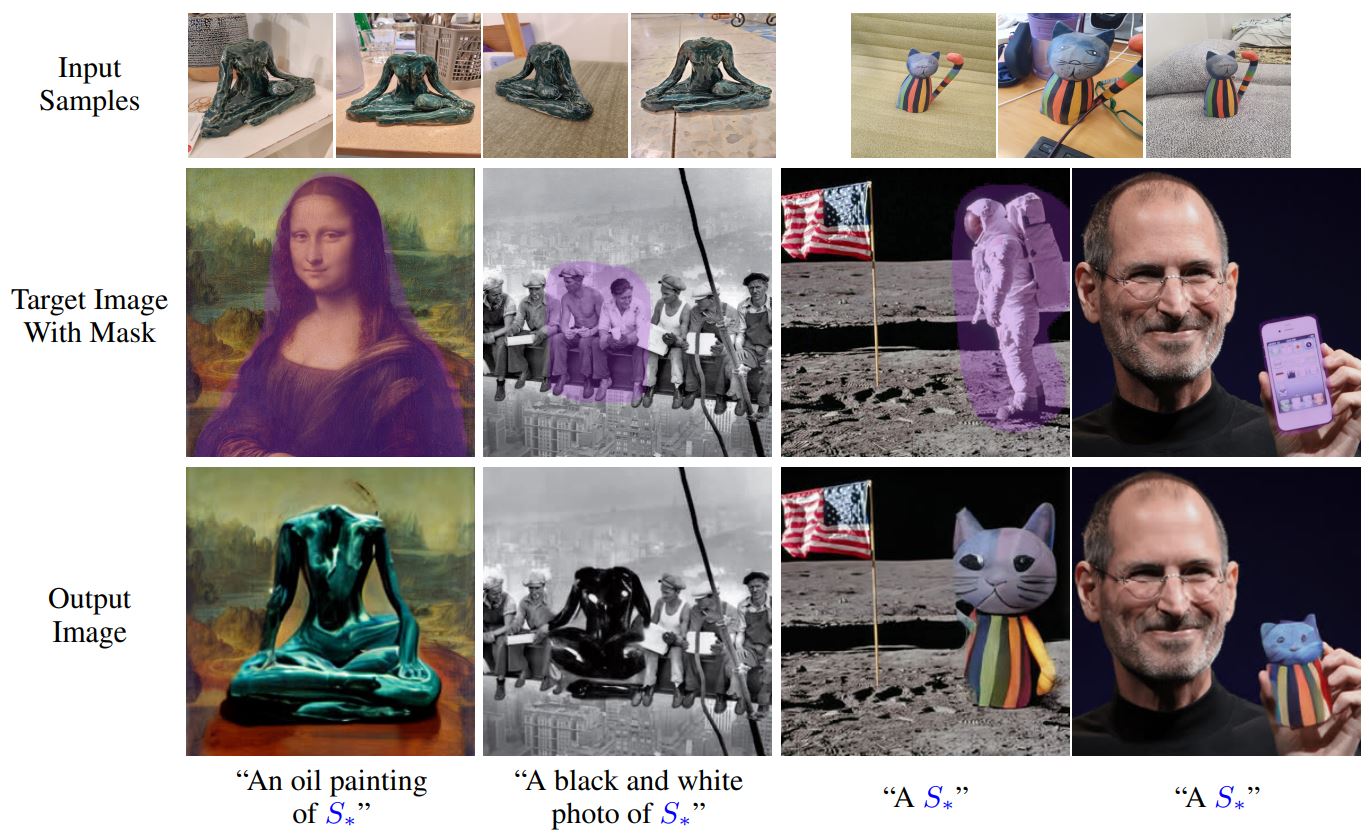

In other words, we ask: how can we use language-guided models to turn our cat into a painting, or imagine a new product based on our favorite toy?

Here we present a simple approach that allows such creative freedom.

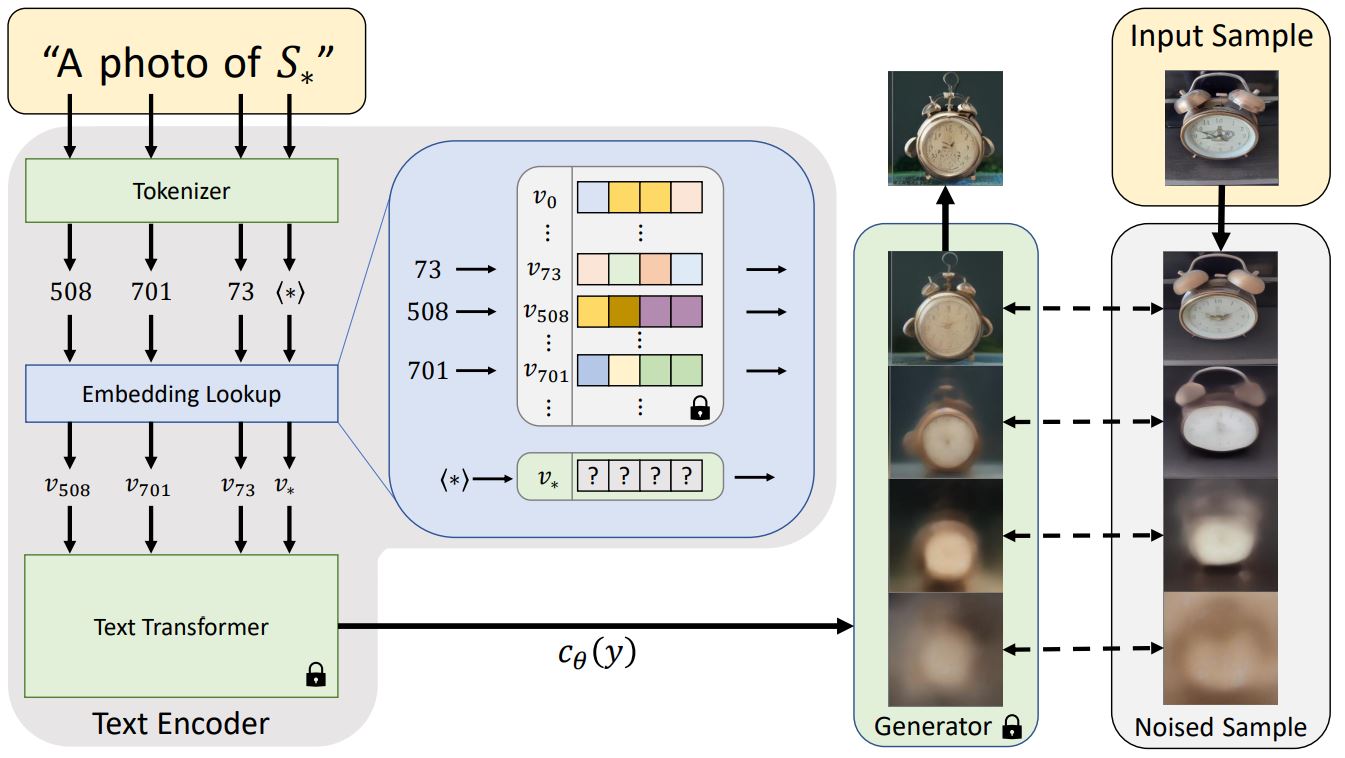

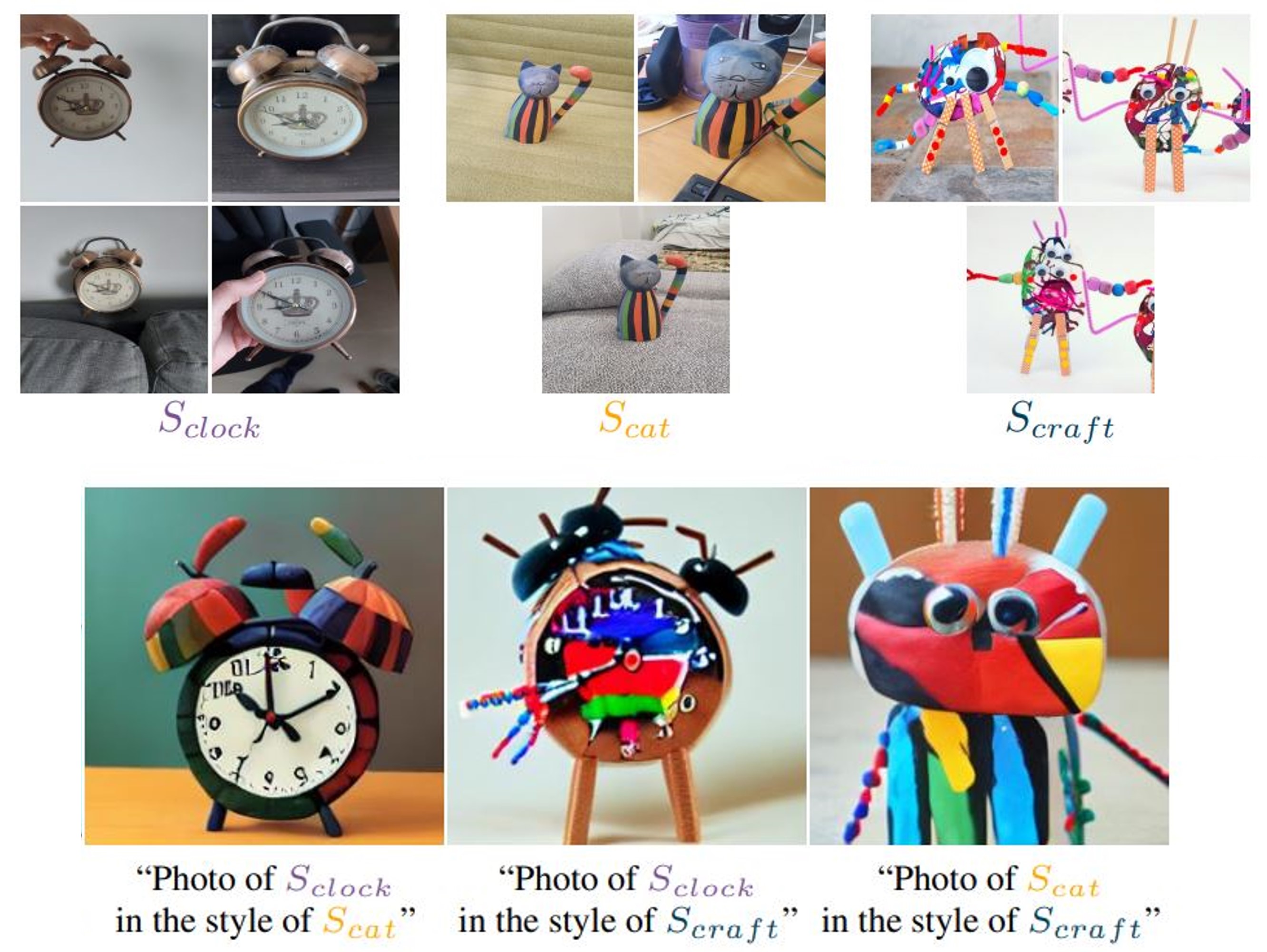

Using only 3-5 images of a user-provided concept, like an object or a style, we learn to represent it through new "words" in the embedding space of a frozen text-to-image model.

These "words" can be composed into natural language sentences, guiding personalized creation in an intuitive way.

Notably, we find evidence that a single word embedding is sufficient for capturing unique and varied concepts.

We compare our approach to a wide range of baselines, and demonstrate that it can more faithfully portray the concepts across a range of applications and tasks.